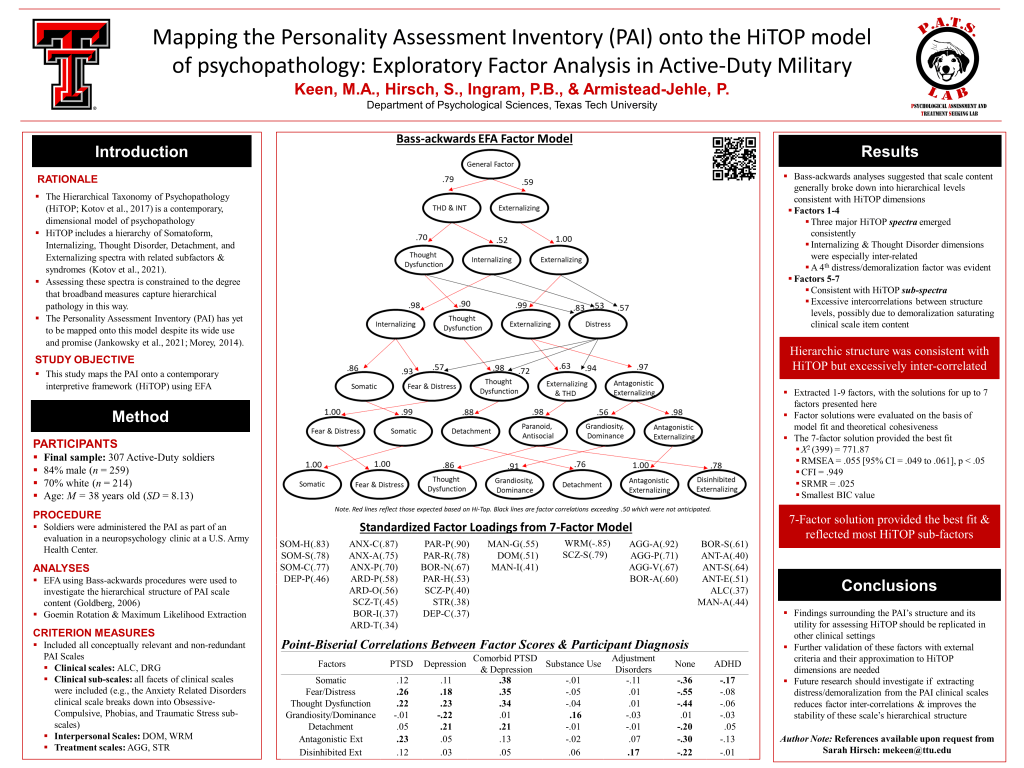

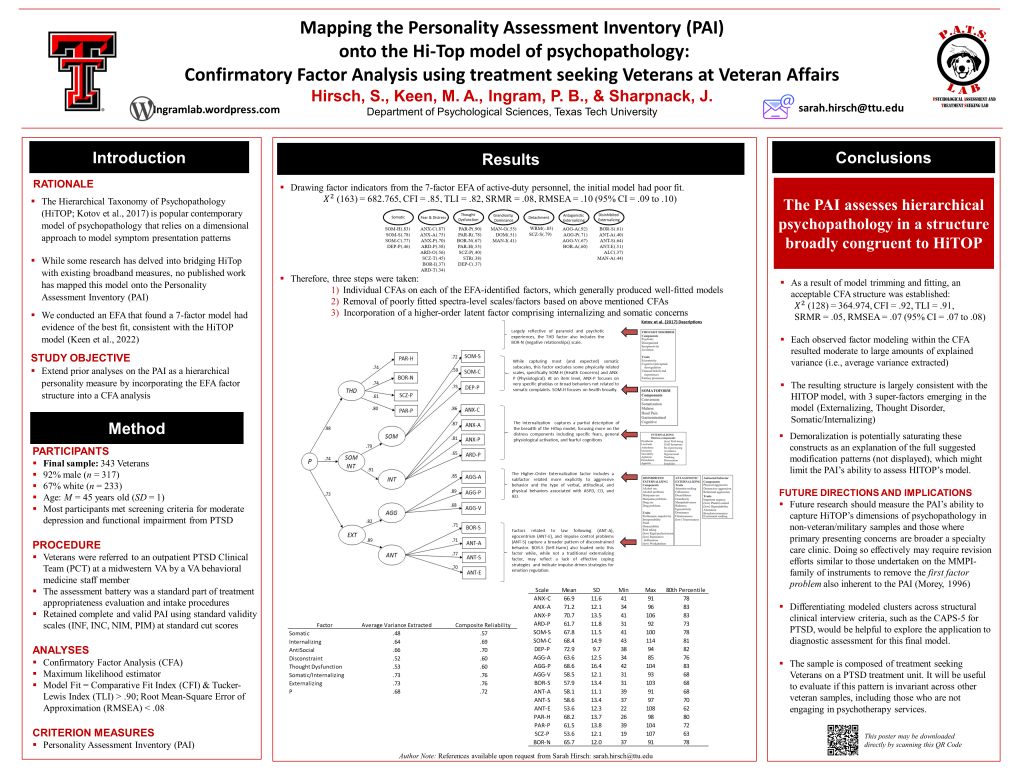

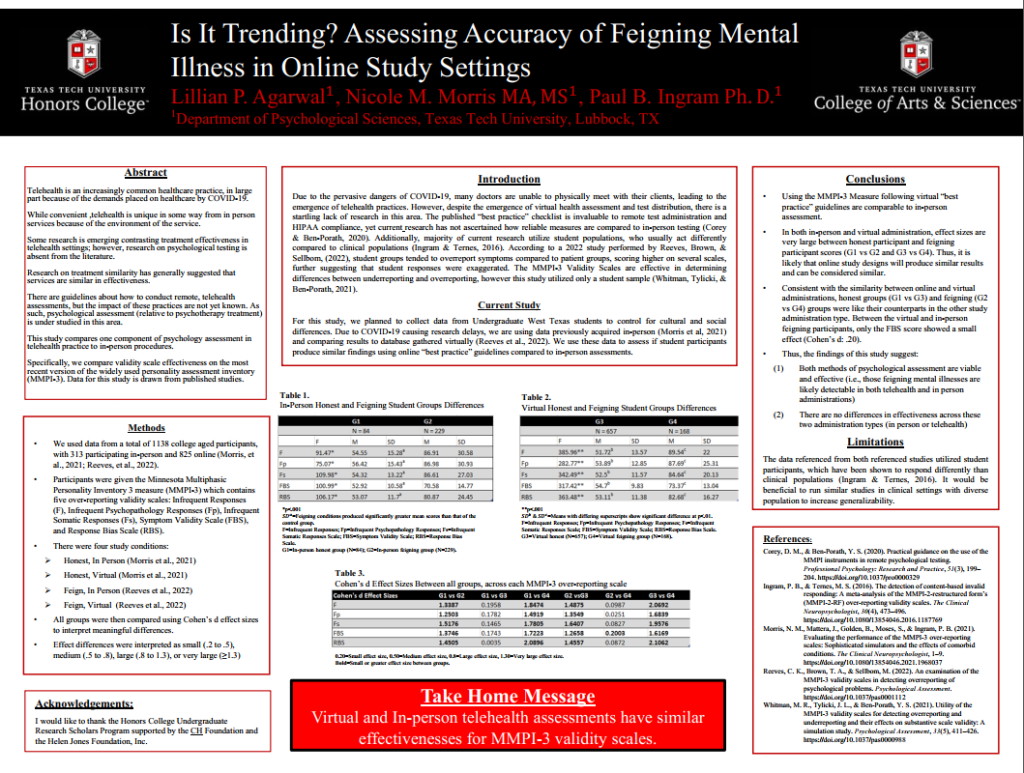

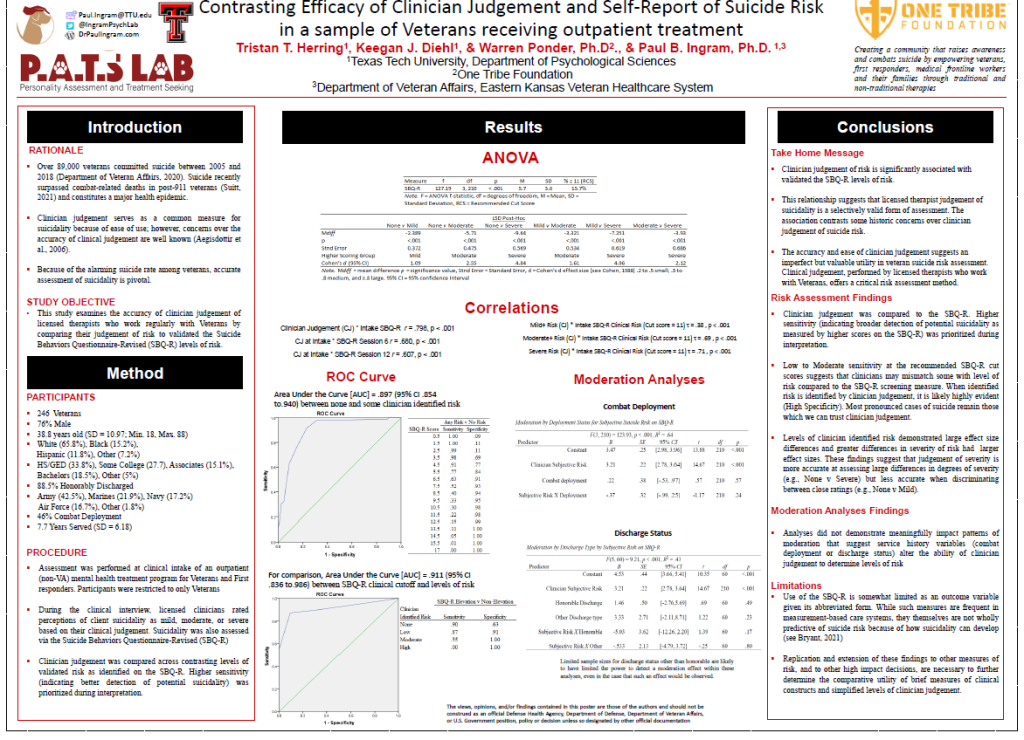

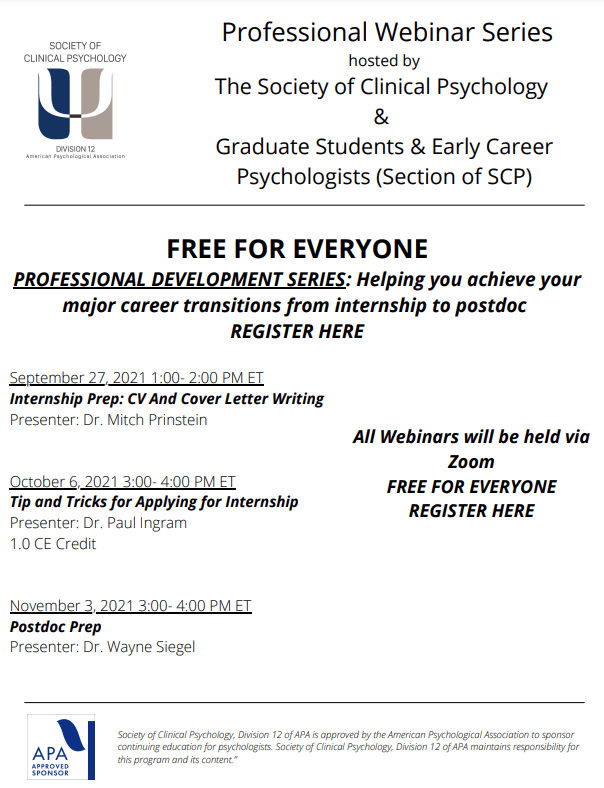

Texas Tech and the PATS lab really brought some awesome stuff this year! Current grad and undergrad members presented a number of projects, and a big group of us attended. I couldn't be more thrilled with everyone's work. Below I touch on the projects we presented and share some pictures of us having a great time all around. Citations link to presentations for download.

An item-level approach to general over- and under-reporting appears to perform better than both the MMPI-3 over-reporting scales, which are content- and theory-based (psychopathology, somatic, cognitive), and the MMPI-3 under-reporting scales.

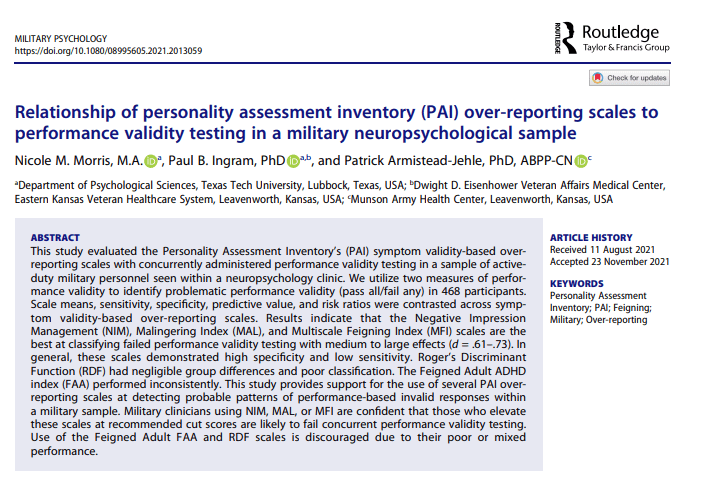

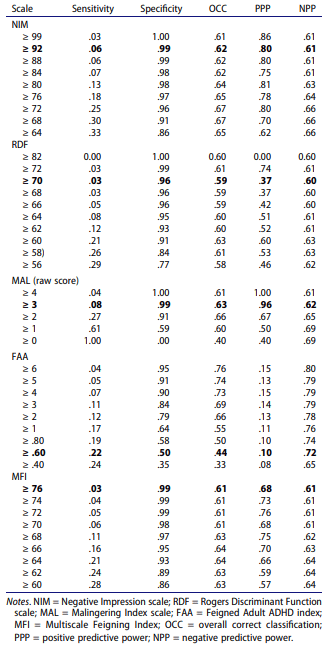

We took a general approach to over-reporting, relying on the assumption that feigning attempts are less specific (more general) than some models of symptom over-reporting assume (see Rogers & Bender). Scale-level means (see Gaines et al., 2013) performed as well as or better than the item-level over-reporting scales of the MMPI-3. We've since replicated this approach in a few clinical samples and found equal or better performance, including against several performance validity tests (PVTs), in a reanalysis of the Ingram et al. (2020) sample of active-duty personnel undergoing neuropsychological evaluation.
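For readers less familiar with the idea, here is a minimal sketch of how a scale-level mean index works: average T scores across several substantive scales, then check how well that composite separates groups using ROC AUC. The scale labels, toy T scores, and function names below are all hypothetical; this is not the MMPI-3 scoring or the exact Gaines et al. procedure, just an illustration of the general logic.

```python
from statistics import mean

# Hypothetical scale labels; these are NOT actual MMPI-3 scale names.
SCALES = ["SCALE_A", "SCALE_B", "SCALE_C"]


def scale_mean_index(t_scores: dict[str, float], scales: list[str]) -> float:
    """Average the T scores of the chosen substantive scales into one index."""
    return mean(t_scores[s] for s in scales)


def auc(pos: list[float], neg: list[float]) -> float:
    """ROC AUC via the Mann-Whitney statistic: the share of (positive, negative)
    pairs in which the positive case scores higher, counting ties as one half."""
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Made-up toy T scores for illustration only (not study data).
feigning = [
    {"SCALE_A": 80, "SCALE_B": 85, "SCALE_C": 78},
    {"SCALE_A": 90, "SCALE_B": 70, "SCALE_C": 88},
    {"SCALE_A": 75, "SCALE_B": 82, "SCALE_C": 80},
]
genuine = [
    {"SCALE_A": 60, "SCALE_B": 65, "SCALE_C": 55},
    {"SCALE_A": 78, "SCALE_B": 50, "SCALE_C": 58},
    {"SCALE_A": 55, "SCALE_B": 62, "SCALE_C": 60},
]

# Compare the averaged composite against a single scale on the same cases.
composite_auc = auc(
    [scale_mean_index(r, SCALES) for r in feigning],
    [scale_mean_index(r, SCALES) for r in genuine],
)
single_auc = auc([r["SCALE_A"] for r in feigning], [r["SCALE_A"] for r in genuine])
print(f"mean-of-scales AUC = {composite_auc:.3f}, single-scale AUC = {single_auc:.3f}")
```

On this toy data the composite reaches an AUC of 1.000 while the single scale gets 0.889, because one genuine case with a high score on a single scale no longer overlaps once scores are averaged. Real data won't be this tidy; the point is only that a general elevation across scales can be captured by a simple mean.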

Pilot data demonstrated that the MMPI-3 can predict positive psychology intervention outcomes and that the symptom scales are negatively associated with strength traits. This may support interpreting low scores as reflecting strengths, although that approach ignores the orthogonal nature of mental health and mental illness (see Keyes, 2002).

Ashlinn really rocked! For her first poster, she had to learn all of the ROC/classification statistics. Just awesome. Findings suggest that the restricted item content will make it difficult to predict any specific pattern of eating at the T75 cut score (which requires 2 endorsements). Given this, it may be wise to move the EAT scale to a critical scale, with endorsed items listed at the end of the report. Next steps, you ask? Over the next year, Ashlinn will be screening individuals in diagnostic groups to grow her samples and examine whether EAT differentiates those diagnostically screened groups.
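As a rough sketch of the classification statistics involved, the snippet below flags scores at or above a cut (T75 here, assuming "at or above" is the flagging rule) and tabulates them against known group membership. The function name and toy data are hypothetical; the real EAT raw-to-T conversion is not reproduced. With a short scale, only a handful of T values are possible, which is part of why a single cut has limited room to discriminate.

```python
def classify_at_cut(
    t_scores: list[float], is_case: list[bool], cut: float = 75.0
) -> dict[str, float]:
    """Flag T >= cut as positive and compute accuracy statistics against
    known group membership (hypothetical helper for illustration)."""
    tp = fp = tn = fn = 0
    for t, case in zip(t_scores, is_case):
        flagged = t >= cut
        if flagged and case:
            tp += 1  # true positive: flagged and in the target group
        elif flagged and not case:
            fp += 1  # false positive: flagged but not in the target group
        elif not flagged and case:
            fn += 1  # false negative: missed member of the target group
        else:
            tn += 1  # true negative: correctly not flagged

    def ratio(num: int, den: int) -> float:
        # Return NaN rather than dividing by zero when a cell total is empty.
        return num / den if den else float("nan")

    return {
        "sensitivity": ratio(tp, tp + fn),
        "specificity": ratio(tn, tn + fp),
        "ppv": ratio(tp, tp + fp),
        "npv": ratio(tn, tn + fn),
    }


# Made-up toy T scores; True marks membership in the target group.
t_scores = [80, 75, 60, 50, 76, 45, 40]
is_case = [True, True, True, False, False, False, False]
results = classify_at_cut(t_scores, is_case)
print(results)
```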

Morris, N.M., Patterson, T.P., Ingram, P.B., & Cole, B.P. (June, 2022). MMPI-3 and Gender: The moderating role of masculine identity on item endorsement. Blitz talk presented at the 2022 Annual MMPI Research Symposium, Minneapolis, MN.

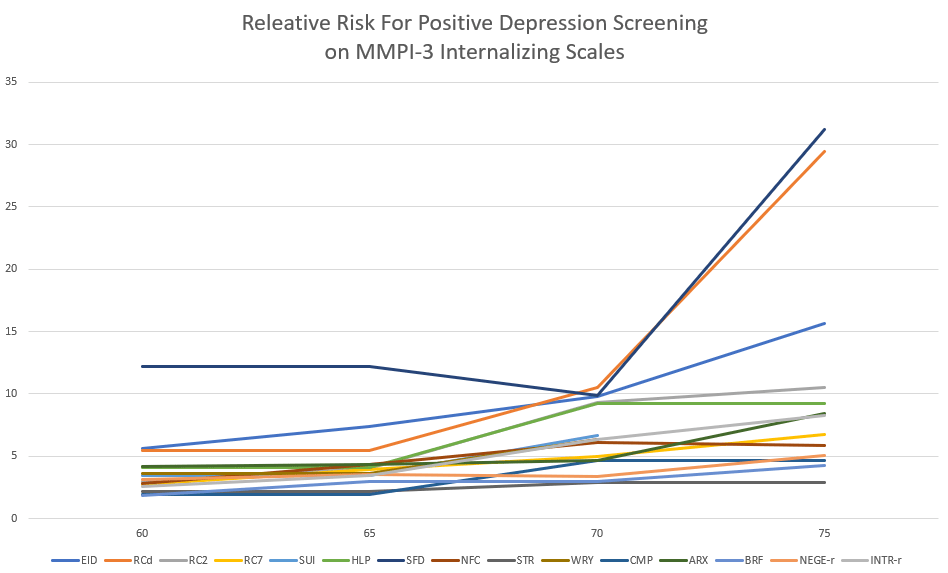

Nicole's work on expanding contextual interpretation of the MMPI-3 is leading the diversity focus that assessment needs. Gender norms predicted an additional 4–13% of variance, beyond symptoms and sex, for most (11 of 16) MMPI-3 internalizing scales. Next, we will use latent class analysis (LCA) to identify clusters of gender norms (masculine and feminine) and examine how they relate to MMPI-3 scale presentations.
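"Variance beyond symptoms and sex" refers to the change in R² when gender norms are added to a model that already contains those predictors. Here's a minimal, dependency-free sketch of that hierarchical regression logic. The variable names and noise-free toy data are hypothetical (so the full model fits perfectly), and ordinary least squares via the normal equations is simply one straightforward way to get R²; it is not necessarily how the analysis in the talk was run.

```python
def solve(a: list[list[float]], b: list[float]) -> list[float]:
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(a)
    m = [row[:] + [bi] for row, bi in zip(a, b)]  # augmented matrix
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[piv] = m[piv], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):  # back substitution
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x


def ols_r2(predictors: list[tuple[float, ...]], y: list[float]) -> float:
    """R^2 of an OLS fit with intercept, using the normal equations X'X b = X'y."""
    rows = [[1.0] + list(p) for p in predictors]
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(k)]
    beta = solve(xtx, xty)
    fitted = [sum(bi * ri for bi, ri in zip(beta, r)) for r in rows]
    ybar = sum(y) / len(y)
    ss_res = sum((yi - fi) ** 2 for yi, fi in zip(y, fitted))
    ss_tot = sum((yi - ybar) ** 2 for yi in y)
    return 1.0 - ss_res / ss_tot


# Hypothetical toy data: outcome is an exact linear function of all three predictors.
symptoms = [1, 2, 3, 4, 5, 6]
sex = [0, 1, 0, 1, 0, 1]
gender_norms = [3, 1, 4, 1, 5, 9]
outcome = [2 * s + x + 0.5 * g for s, x, g in zip(symptoms, sex, gender_norms)]

r2_step1 = ols_r2(list(zip(symptoms, sex)), outcome)                # symptoms + sex
r2_full = ols_r2(list(zip(symptoms, sex, gender_norms)), outcome)  # + gender norms
delta_r2 = r2_full - r2_step1  # incremental variance explained by gender norms
print(f"step 1 R^2 = {r2_step1:.3f}, full R^2 = {r2_full:.3f}, delta = {delta_r2:.3f}")
```

Because the models are nested, adding a predictor can't lower R², so the delta is the share of variance the gender-norm measure explains over and above symptoms and sex.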

And now for some pictures of all the lab fun!

Besides the lab fun, I also got a chance to catch up with my old Western Carolina advisor, David McCord, and his students. The MMPI has one of the best research communities, and I love being part of it.