New Paper: Treatment outcomes in a residential substance use sample

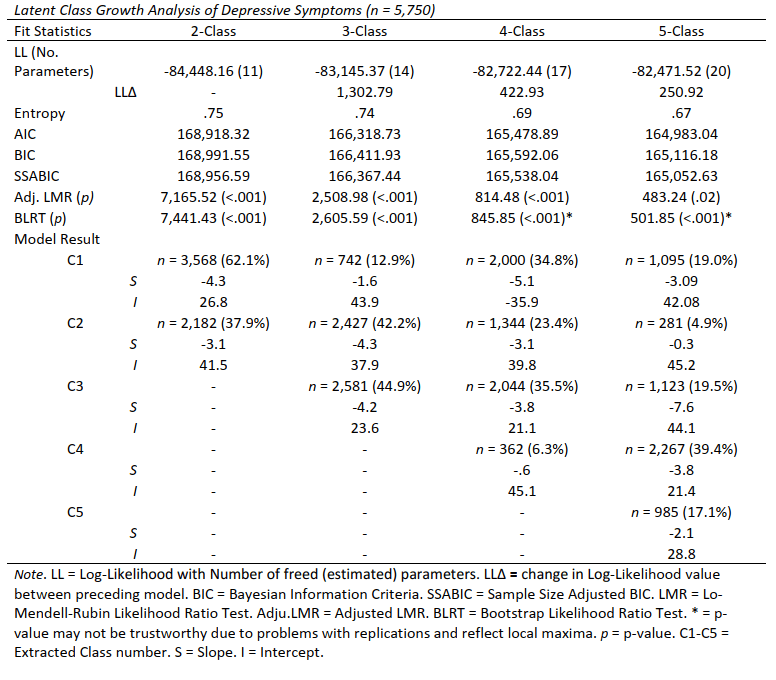

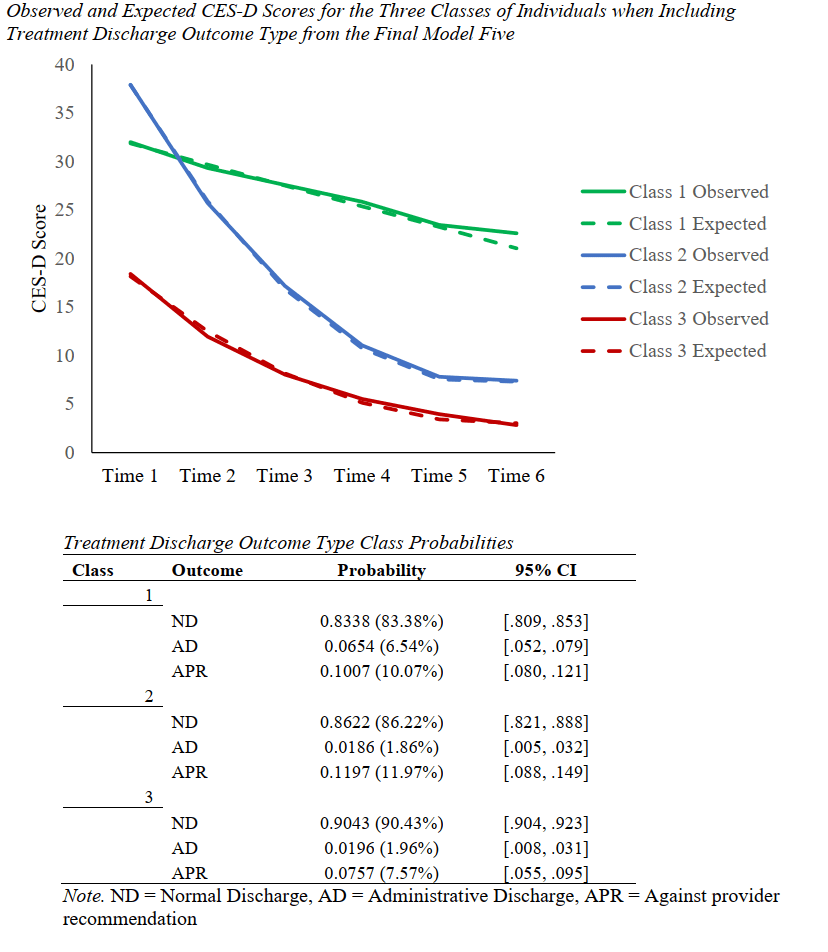

I’m thrilled to have gotten the e-mail from Nicole Morris this morning letting me know that our paper was accepted into Addictive Behaviors. The study uses a large, multi-site sample of individuals receiving residential substance use treatment and asks whether the CES-D (a common measure of depression) is useful for predicting treatment outcomes. The results are a resounding success!

Download the paper HERE

Some brief results are presented below.

- The CES-D has 3 factors in residential substance use populations, but scores largely represent the negative mood/anhedonia experiences of depression because of how many items load on that factor

- CES-D scores are (unsurprisingly) very high in those undergoing substance use treatment; most exceed a screening cut score for depression

- Higher CES-D scores predict worse discharge outcomes (fewer normal discharges, more administrative and AMA discharges, although this varies across symptoms), and scores tend to follow 3 distinct paths over time, each with its own intercept (starting score) and slope (rate of change)

- Drug of choice and gender don’t play a role in the CES-D’s predictive utility in this population

Applying for graduate school?

Applying to graduate school can be super confusing and stressful. I wanted to try and give some insight into what you can do along the way to maximize your potential for admission into a psychology doctoral program, and how I (and many others) view applications and the process in general. There is a lot more that I could say, but I wanted to give some sense of my perspective about applicants and their “fit” with me as a mentor.

A few take-aways

- Consider funding – being debt free is important

- Make sure the program has good training outcomes

- Make sure your mentor is someone you want to be in a professional relationship with for several years (how you are treated matters)

- Be flexible in your pathways to career goals, and in your geographic openness

- Be proactive in your planning – being a lab self-starter is a HUGE thing

- This is an extremely competitive process, so it is possible that you are a good candidate and that you don’t get accepted.

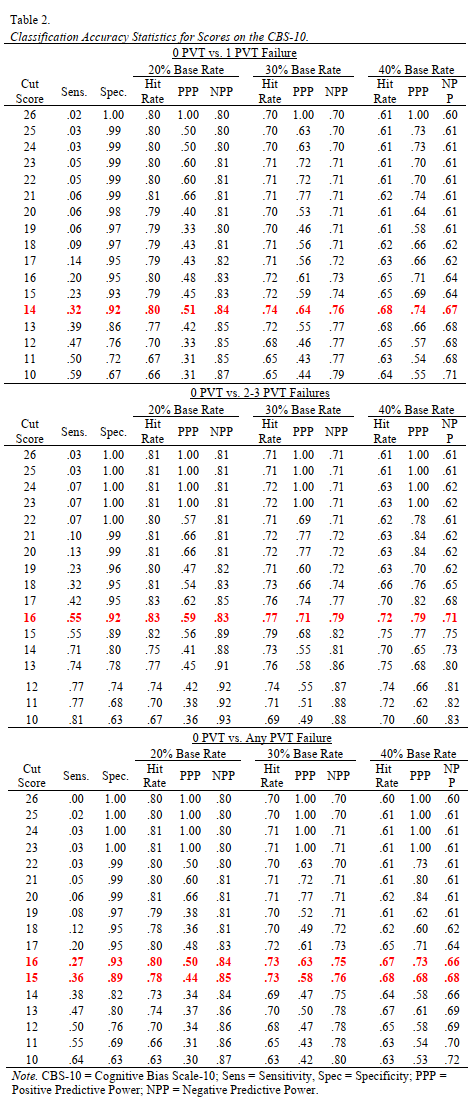

Doing neuropsychology evaluations with the PAI? The CBS scale is an effective validity scale for military populations

Although the PAI is covered in training and used at rates similar to the MMPI (Wright et al., 2017; Ingram et al., 2020), its validity scales don’t have the same level of study or rate of detection for invalid responding within military populations (see Brittney’s thesis as an example). In fact, even when new validity scales are developed, they are rarely assessed beyond their initial validation.

Gaasedelen et al. (2019) developed the Cognitive Bias Scale (CBS) as a comparative measure to the MMPI-2-RF’s highly effective Response Bias Scale (RBS). The RBS employed criterion coding using PVT failure to identify potentially useful items, and as a result of these methods some consider it the most reliable MMPI-2-RF validity scale given its blended SVT/PVT approach (Ingram & Ternes, 2016). The new CBS scale used the same methods to develop a scale assessing feigned cognitive symptoms on the PAI, a domain of symptoms that was previously overlooked. In our most recent study, we replicated their validation in an active duty military sample to see if their suggested cut scores and observations of effectiveness were generalizable.

What did we find?

- Large effect sizes differentiated scores on the CBS between those passing PVTs and failing PVTs

- The CBS showed high specificity and low sensitivity, similar to the initial CBS validation and comparable to what has been observed for the RBS on the MMPI-2-RF

- In general, a cut score of 16 is recommended to maximize specificity while also keeping moderate sensitivity
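For anyone less familiar with these terms, here is a minimal Python sketch of how sensitivity and specificity are computed at a given cut score. The scores below are made up for illustration and are not data from our study. The function name `classification_accuracy` and the rule that scores at or above the cut get flagged are assumptions for this example.

```python
def classification_accuracy(scores, failed_pvt, cut):
    """Return (sensitivity, specificity) when scores >= cut are flagged as invalid.

    scores     -- validity scale scores (hypothetical values here)
    failed_pvt -- True if the person failed performance validity testing
    cut        -- cut score; flagging at or above the cut is an assumption
    """
    pairs = list(zip(scores, failed_pvt))
    true_pos = sum(1 for s, failed in pairs if failed and s >= cut)
    false_neg = sum(1 for s, failed in pairs if failed and s < cut)
    true_neg = sum(1 for s, failed in pairs if not failed and s < cut)
    false_pos = sum(1 for s, failed in pairs if not failed and s >= cut)
    sensitivity = true_pos / (true_pos + false_neg)  # share of PVT failures flagged
    specificity = true_neg / (true_neg + false_pos)  # share of PVT passes not flagged
    return sensitivity, specificity


# Hypothetical scores: 10 people who passed PVTs, then 10 who failed.
passed = [5, 8, 10, 12, 14, 15, 17, 9, 11, 6]
failed = [18, 20, 12, 14, 16, 10, 13, 22, 7, 15]
scores = passed + failed
failed_pvt = [False] * len(passed) + [True] * len(failed)

sens, spec = classification_accuracy(scores, failed_pvt, cut=16)
print(f"cut=16: sensitivity={sens:.2f}, specificity={spec:.2f}")
```

Raising the cut score trades sensitivity for specificity: fewer people who passed PVTs are flagged incorrectly, but more people who failed are missed.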

Check out the table below for classification accuracy information (red bolded text marks the recommended values for each comparison [> .9 specificity, ~.3 sensitivity]):

Brittney defended her thesis: MMPI-2-RF and the PAI in differentiating PTSD and Depressive Disorders

Brittney did absolutely awesome yesterday and defended her thesis. There is too much to say about this project and I’m thrilled about how it went, and how it turned out. We’re starting to transform the project into papers next and anticipate the products Brittney writes as being very useful additions to the literature surrounding differential diagnosis in active duty personnel, greatly expanding available literature with these two instruments.

If you want to catch a glimpse of how she did, here is her presentation (about 27 minutes and includes only her presentation/summary of the findings): Check it out!

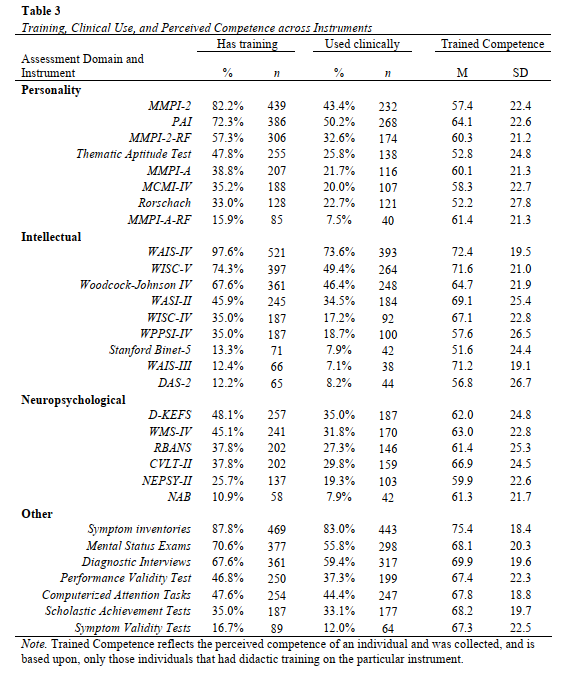

Training in Assessment: Current State and Future Directions

About a year and a half ago I launched (and subsequently published) a pilot project with Drs. Adam Schmidt and Matt Cribbet to look at some trends in training for psychological assessment by asking trainees themselves about their experiences. This week, the first paper from the large-scale study that followed up on that pilot, and built on the feedback we received, was published in Training and Education in Professional Psychology (TEPP). This time we used a national sample of 534 doctoral trainees in health service psychology programs. GET THE PDF HERE

In short, our results suggest that (i) the patterns of training coverage mirror the instrument use patterns of psychologists who are currently in clinical practice, (ii) students receive more frequent didactic and classroom exposure during training than practice opportunities with clients, and (iii) program types [PhD, PsyD] and program areas [Clinical, Counseling] are generally similar in their coverage. Let’s break down what this means to me, as a researcher and professional working to ensure appropriate and effective psychological assessments.

- It’s good to see training conform to what professionals do, in general. This means that students will likely be ready to step into similar professional roles as those we currently see existing.

- Having the same content across programs means that in a way, the field has converged on what is the standard of content coverage. That doesn’t mean that all content is covered the same, or that all programs are equally good at training, but it is a good starting point for ensuring high levels of client care.

- There are some things that students don’t get a lot (or enough) training on. One is structured diagnostic interviewing, with 25% of students either not having training or not knowing they were trained (which is just as bad). Another is symptom validity testing. Response styles are a major part of ensuring appropriate interpretations are made, so not being prepared to assess invalid responding means not being ready to appropriately make diagnostic decisions with the aid of assessments. This is a huge issue for the VA and for veterans: since 50-70% produce invalid symptom response styles on the MMPI (Ingram et al., 2019), characterizing those response styles accurately is critical to the treatment recommendations that come next. Good care starts at good training. Say it with me.

- Students get lots more classroom time than hands-on learning, but hands-on learning is when the complexity and nuance of this advanced integrative skill come into play. To advance training, there has to be more clinical use, not just class coverage.

There was a lot more to unpack from the article, but those were a few of my reflections. I’m looking forward to where we go next as a research team on this, and am excited to see the work related to performance-based competency and factors impacting use and intention to work with psychological assessment.

MMPI-2-RF and MMPI-3: Implications on treatment engagement

Nicole recently presented research summarizing a paper we have out for review examining the utility of the MMPI-2-RF and MMPI-3 to predict treatment use and treatment-related attitudes in a short-term longitudinal sample of individuals with moderate to severe depressive symptoms. This was presented at the 2020 MMPI symposium and the PowerPoint may be FOUND HERE [CLICK]

Abstract

Literature surrounding the MMPI-2-RF has started to demonstrate convergence about which scales best predict treatment engagement and outcomes; however, it is also limited in several ways. For instance, externalizing scales frequently emerge as indicators of treatment dropout (Anestis et al., 2015; Mattson et al., 2012; Tylicki et al., 2019); however, this is not always true (Arbisi et al., 2013; Tarescavage et al., 2015). Moreover, studies have frequently focused on outcomes using clients who have already initiated treatment across different settings, while only one has examined the capacity of the MMPI-2-RF scales to predict treatment initiation (Arbisi et al., 2013). As such, there is notable variability in scale-specific findings as well as in the type of behaviors that are predicted on the MMPI-2-RF. In addition, the soon-to-be released MMPI-3 underscores the necessity of establishing a similar research base for this new instrument. While many of its scales are based on existing MMPI-2-RF scales, the new and revised scales have yet to undergo extensive validation. This is the first such study which examines treatment use and engagement among those who would likely benefit from those services but who are not recruited from treatment locations (e.g., some are in therapy and some are not).

Big Takeaways

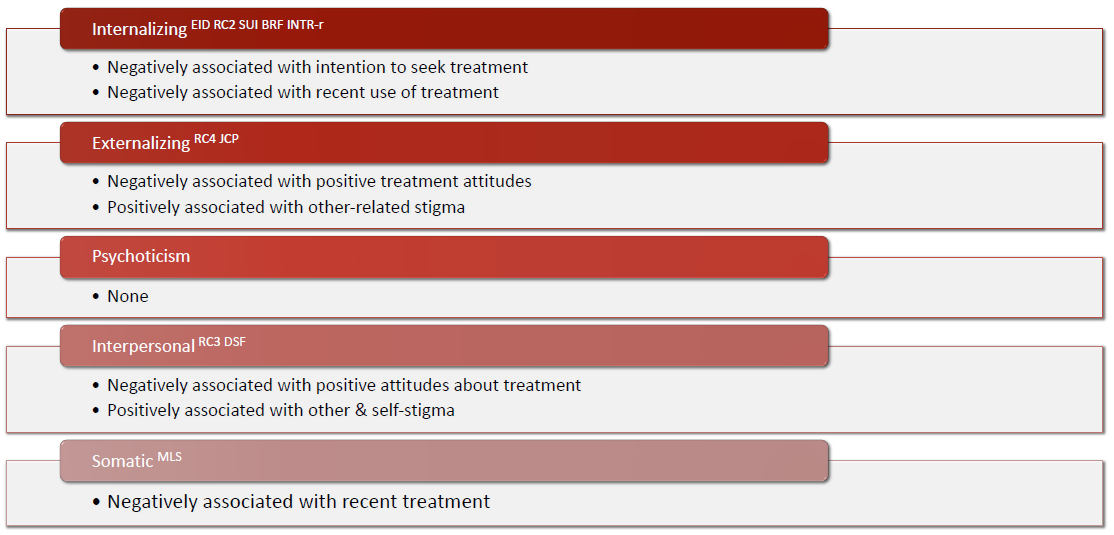

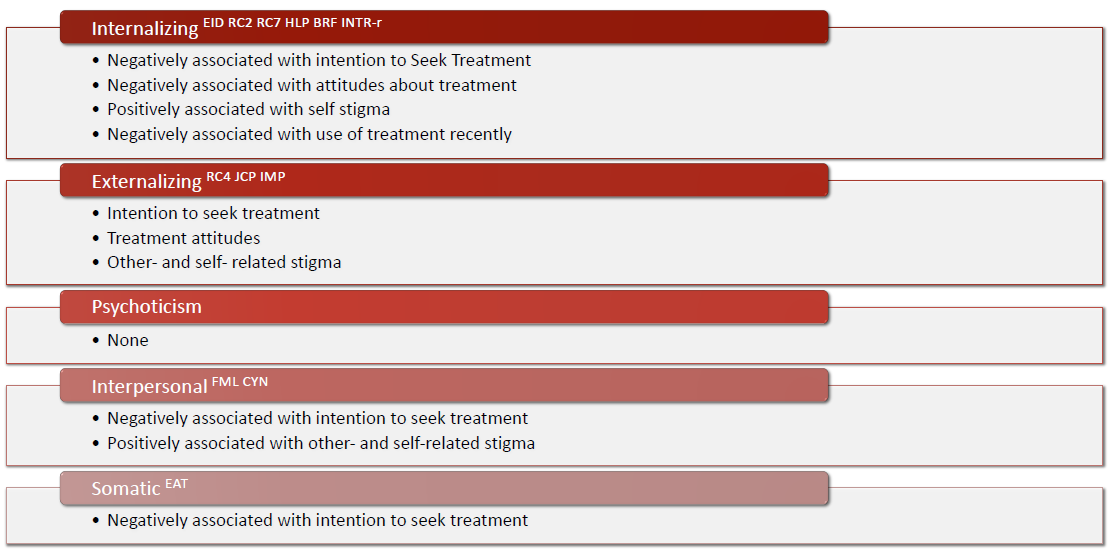

- The MMPI-3 has stronger and broader relationships among criterion measures, suggesting probable improvement over the MMPI-2-RF scales.

- Measures within the internalizing and interpersonal domains are the strongest and most numerous predictors, and externalizing scales are also useful.

An overview of general scale-related findings is summarized below:

MMPI-2-RF

MMPI-3

Lab Diversity Statement

This is a difficult time for our society, as well as for ourselves, our clients, and our communities. This year has posed substantial challenges to those within the United States, as well as those around the world. Most recently, the #BlackLivesMatter movement laid bare once again the difficulties that many individuals in our department, community, and country continue to face. In times such as these, it is important to me that I express my unwavering support for this movement, and for the causes it represents. The tragic deaths and the resulting protests are a direct result of the historic and systemic unequal treatment of racial and ethnic minorities. George Floyd, Ahmaud Arbery, Breonna Taylor, Eric Garner, and Philando Castile represent only a fraction of the violence against minorities in the United States now, as well as over our 400-year history. Black individuals and communities, as well as other minority groups, deserve equality. They deserve safety and freedom from oppression. A core mission of counseling psychology is advocacy for mental health and human welfare. Thus, it is important that we, united as a lab and academic community, stand firmly against racism, discrimination, and inequality. The PATS lab is in solidarity with #BlackLivesMatter, and with all others who advocate for equality and justice. As a pillar of counseling psychology, we support and advocate for social justice and social change. I am proud to do so. We will continue to do so.

While these times are challenging, I am also encouraged by the recent ruling from the Supreme Court of the United States extending Title VII of the Civil Rights Act of 1964 to gender identity and sexual orientation. This ruling is a landmark decision which clarifies what we already knew as a profession: that all individuals are worthy of love, deserving of respect, and of equal value. Unfortunately, this monumental victory also comes in the wake of protections being rolled back for our LGBTQ+ community in health care settings. This is a heartbreaking outcome for our lab, considering we directly work to improve, implement, and disseminate research that supports clinicians providing empirically supported and diversity informed treatment. To this end, while also mourning the ongoing tragedies of racial and ethnic injustice, we celebrate Pride Month and the progress made during it. Positive steps in social change underscore our belief that change is happening, and that progress is possible. This change, and the beliefs espoused here, are central to our lab identity, culture, and mission.

New Paper on the MMPI-2-RF

Recently accepted to Spirituality in Clinical Practice. Pre-print coming soon.

Effective screening and psychological assessment is critical for identifying and screening out clergy candidates who may be inappropriate for these callings, based on psychopathology, addictive behavior, emotional immaturity, personality characteristics incongruent with effective ministry, or deviant sexual interests and behaviors. Emotional deficits such as avoidance of negative emotions and inability to cope with negative affect are known risk factors for various problematic behaviors that psychological assessment of clergy applicants is intended to identify (e.g., sexual offending). Likewise, a preponderance of negative emotions (i.e., anger, impatience, irritability, and resentment) is regularly found in clergy credibly accused of sexual misconduct.

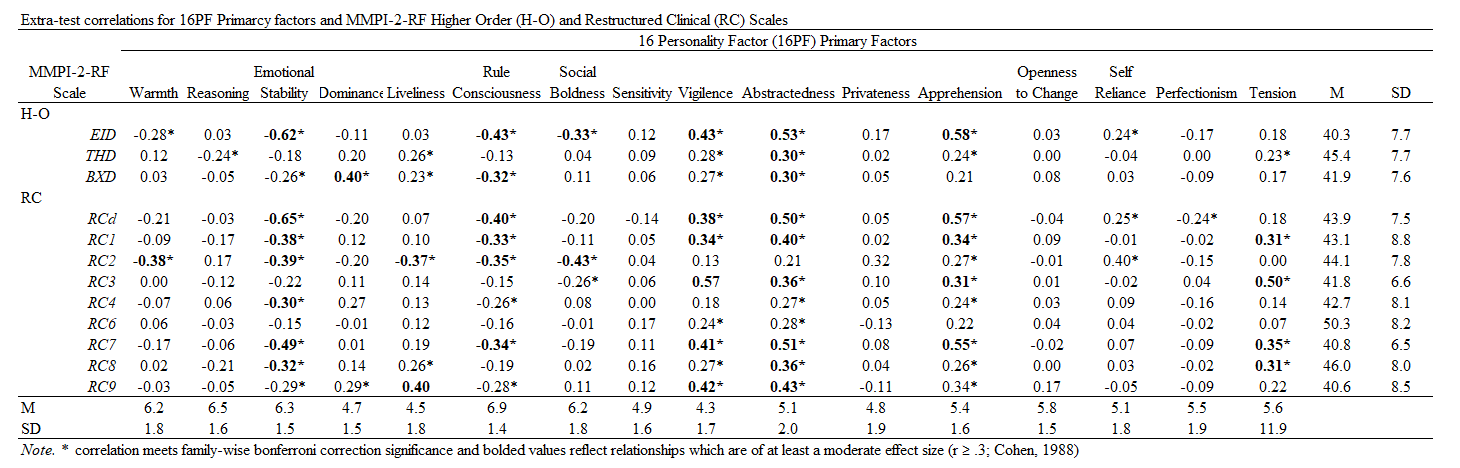

In clergy applicants (n = 137) undergoing psychological assessments as part of their evaluation for a diaconate formation program between 2013 and 2019, correlational relationships between 16PF and MMPI-2-RF scale scores were examined. Partial results are presented below.

Significant relationships were most pronounced between 16PF global factors of Anxiety and Self-Control and the MMPI-2-RF scales of emotional (e.g., inefficacy, self-doubt, anxiety) and interpersonal dysfunction (e.g., social avoidance, family problems), consistent with an emotional deficits hypothesis. Behaviorally disconstrained relationships were less pronounced and irregular, perhaps owing to the low rate of clinically-elevated scores and the restricted range of scores due to defensive responding, as evidenced by high K-r/L-r scores. Thus, rather than focusing on egregious problematic behavior that is likely minimized or hidden in an admission context, psychological evaluations may be more effective by focusing on problematic emotional states as well as candidates’ ability to manage stress and cope with challenges (e.g., Baer & Miller, 2002). Based on results observed within this study, the MMPI-2-RF appears well-suited to this task.

Depression in Men, KU press release on work with collaborator Brian Cole

Last year Brian Cole (Assistant Professor of Counseling Psychology and Director of Training at the University of Kansas) and I had an article examining the ways that stigma and masculinity predict different types of treatment seeking behaviors. KU had a news release today on his work on men’s depression, which includes our PAPER [click here for a pre-print version].

It’s always cool to see work with colleagues get spotlighted. I can’t wait to do more with him in the next project on depression help seeking.