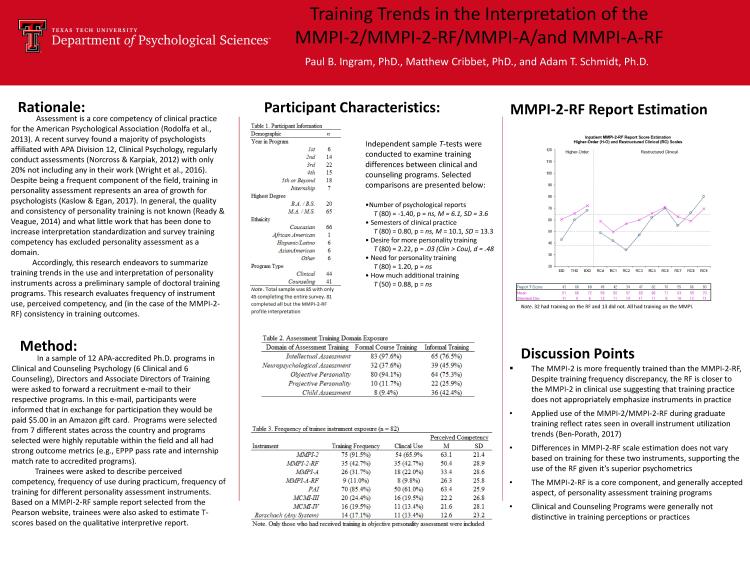

Some colleagues and I here at Texas Tech are taking a look at training trends in assessment (Click me for the presentation PPT) and the implications those trends have for the validity of instrument use. After all, if scales are valid indicators of their intended constructs but are not used appropriately or consistently (especially during graduate school, when supervision and feedback are at their highest and the material is freshest), then there is reason to be concerned about how this translates into practice after graduation. The poster being presented in two weeks looks at personality instruments specifically, and there are a few things to note:

- Clinical and Counseling Psychology don’t differ on a majority of training variables (either perceived training or actual, skill-based assessment outcomes). Partial results from this are reported in the poster. Assessment is a vital domain of psychology, and this supports my position that counseling psychology is just as ready to do it as anyone else in applied psychology.

- Frequency of use for personality instruments in training settings mirrors that observed in instrument sales, suggesting practicum is an important part of training in assessment. In contrast, courses do not reflect this same pattern and are not likely to prepare people for the instruments they are likely to encounter (e.g., MMPI-2 versus MMPI-2-RF rates).

- Skills in interpreting the MMPI-2 carry over fairly well to the MMPI-2-RF, with no significant differences in narrative profile interpretation or in symptom frequency estimation (unreported here).

- Most individuals completed the entire study, but a sizable portion quit the moment they were asked to interpret personality measures. This is not an issue of data missing completely at random, and it suggests that graduate students have a distinctive reaction when they see personality assessment tasks. I suspect it's tied to competency and self-perceptions of under-preparedness. Conclusions about how stable objective personality measure interpretations are remain greatly limited by this sizable, self-selected exclusion by participants.

- Training patterns vary substantially with regard to what is reported from objective personality instruments. As is happening in neuropsychological assessment, there should be a more standardized practice of reporting results so as to improve personality assessment.

Disclaimer: The data here represent a preliminary analysis of the project and cover only selected thematic results. Results here are consistent with those seen in the full sample. Full results are being prepared for a paper and will follow in a subsequent post.

Personal Impression from results: As a field, we cannot focus exclusively on scale validity, treatment efficacy, etc. and assume that training in those topics is sufficient. There needs to be an APA-led initiative on training standards so that we can ensure fidelity and adhere to our role as social scientists.